Introducing Slashr: A Live Feed of Every Validator Incident

We Built a Live Feed of Every Validator Incident. Here's Why.

Validators go down. It happens constantly. A Solana validator misses votes for three hours. A Sui validator gets flagged by peers and forfeits an epoch of rewards. An Ethereum validator gets slashed. A Cosmos validator double-signs and loses real money.

This is not news. What's news is that almost nobody is watching it happen in real time, across chains, in one place.

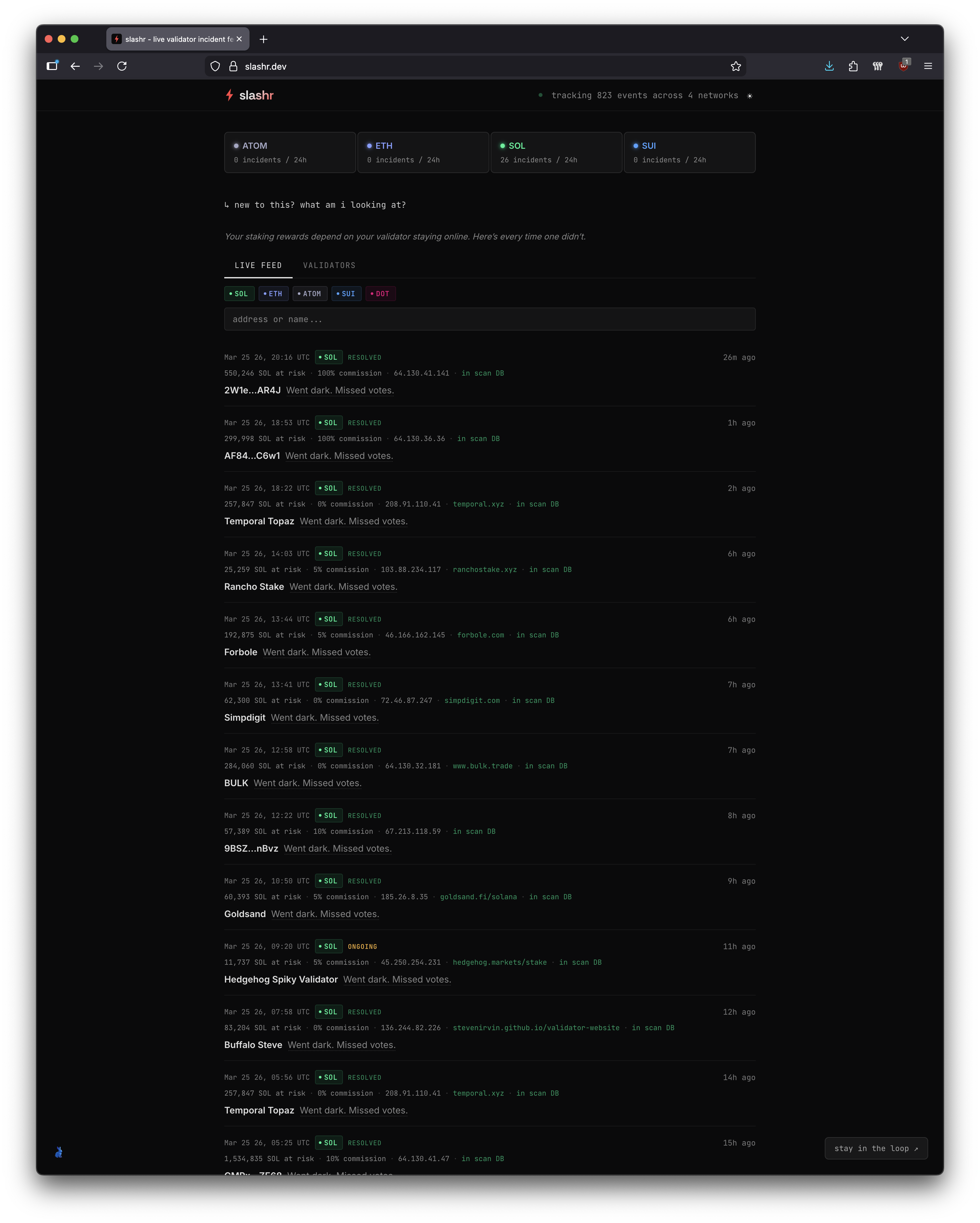

So we built one: slashr.dev.

What it does

Slashr is a live incident feed. Every time a validator goes dark, misses votes, gets slashed, or gets flagged by peers, it shows up. Right now we're tracking Solana, Ethereum, Sui, and Cosmos. Polkadot (DOT) is coming.

Each incident shows the validator's name, how much stake is at risk, its commission rate, its IP address, and whether the incident is ongoing or resolved. You can filter by chain or search for a specific validator by address or name.
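To make the shape of a feed entry concrete, here is a minimal sketch of an incident record and the chain/search filter described above. Every name here (`Incident`, `Chain`, `Status`, `filter_feed`) and every field type is an assumption for illustration, not Slashr's actual schema or API.

```rust
use std::net::IpAddr;

// Hypothetical types for illustration; not Slashr's real schema.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Chain {
    Solana,
    Ethereum,
    Sui,
    Cosmos,
}

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Status {
    Ongoing,
    Resolved,
}

#[derive(Debug, Clone)]
pub struct Incident {
    pub chain: Chain,
    pub validator_name: String,
    pub validator_address: String,
    pub stake_at_risk: f64,  // in the chain's native token (assumed unit)
    pub commission_pct: f64, // commission rate as a percentage (assumed unit)
    pub ip: Option<IpAddr>,  // None when no address is known
    pub status: Status,
}

/// Keep incidents that match an optional chain filter and an optional
/// case-insensitive substring search over validator name or address.
pub fn filter_feed<'a>(
    feed: &'a [Incident],
    chain: Option<Chain>,
    query: Option<&str>,
) -> Vec<&'a Incident> {
    let needle = query.map(|q| q.to_lowercase());
    feed.iter()
        .filter(|i| chain.map_or(true, |c| i.chain == c))
        .filter(|i| match &needle {
            None => true,
            Some(n) => {
                i.validator_name.to_lowercase().contains(n.as_str())
                    || i.validator_address.to_lowercase().contains(n.as_str())
            }
        })
        .collect()
}
```

Calling `filter_feed(&feed, Some(Chain::Solana), None)` would return only the Solana entries, and passing `Some("alpha")` as the query would match any validator whose name or address contains that string.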

That's it. No dashboards with seventeen tabs. No analytics suite. A feed.

Why it matters

If you're delegating stake to a validator, your rewards depend on that validator staying online. When they go dark, you lose money. Not theoretical money. Actual missed rewards, actual slashing penalties.

The problem is visibility. Most delegators have no idea when their validator has an incident until the rewards don't show up. By then it's over. The information exists on-chain, but nobody aggregates it across networks in a way that's useful in real time.

Slashr fixes that. Open a tab, leave it running, know what's happening.

The scanning connection

Here's where it gets interesting. Some incidents on the feed have an "in scan DB" link next to them. That link takes you to our scanning infrastructure, the same system we've been writing about in previous posts. The eBPF/XDP scanner, the timing fingerprints, the CVE analysis.

When a validator goes down, the question most people ask is "when will it come back?" The question we ask is "why did it go down, and what's actually running on that host?"

Because we've already scanned most of these validators. We know what ports they're exposing. We know what services are running that shouldn't be. We know which ones have unpatched CVEs sitting on internet-facing services. When a validator goes dark, we can cross-reference the incident against what we already know about its infrastructure.

A validator that went dark because of a routine restart is different from a validator that went dark while running an unpatched SSH daemon on a publicly accessible port. The incident feed tells you that a validator went dark. The scan database tells you whether it was also exposing an unpatched service.
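The cross-reference amounts to a lookup keyed on the host. The sketch below assumes the scan database can be modeled as a map from IP address to a record of open ports and unpatched CVE identifiers; `ScanRecord`, `ScanContext`, and `cross_reference` are hypothetical names, and the real scan data is certainly richer than this.

```rust
use std::collections::HashMap;
use std::net::IpAddr;

// Hypothetical, simplified view of one scanned host.
#[derive(Debug, Clone, PartialEq)]
pub struct ScanRecord {
    pub open_ports: Vec<u16>,
    pub unpatched_cves: Vec<String>, // CVE identifiers as plain strings
}

// What the scan data adds to an incident on a given host.
#[derive(Debug, PartialEq)]
pub enum ScanContext {
    /// The host is not in the scan database.
    NotScanned,
    /// The host was scanned and no unpatched CVEs were recorded.
    NothingFlagged,
    /// The host was scanned and has unpatched CVEs on record.
    ExposedWithCves { ports: Vec<u16>, cves: Vec<String> },
}

/// Look up the incident's host in the scan database and summarize what is known.
pub fn cross_reference(ip: IpAddr, scan_db: &HashMap<IpAddr, ScanRecord>) -> ScanContext {
    match scan_db.get(&ip) {
        None => ScanContext::NotScanned,
        Some(rec) if rec.unpatched_cves.is_empty() => ScanContext::NothingFlagged,
        Some(rec) => ScanContext::ExposedWithCves {
            ports: rec.open_ports.clone(),
            cves: rec.unpatched_cves.clone(),
        },
    }
}
```

Keeping `NotScanned` separate from `NothingFlagged` matters: the absence of a scan record says nothing about the host, while a clean record says something.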

How it works

The monitoring system behind Slashr started as Ferret, a homegrown tool that watched on-chain state and fired Slack alerts when validators went dark. We found it so useful that we rebuilt the whole thing from scratch in Rust. The new backend watches on-chain state across multiple networks continuously. When it detects a delinquency, a missed vote run, a slashing event, or a peer flag, it records the event with full context: which validator, how much stake, what happened, when it started, and when, if ever, it resolved.
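The record-and-resolve lifecycle can be sketched as a small state tracker. This is an illustrative model only, not the actual backend: `Tracker`, `Event`, `Kind`, and `observe` are hypothetical names, timestamps are assumed to be Unix seconds, and each validator is assumed to have at most one open incident at a time.

```rust
use std::collections::HashMap;

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Kind {
    Delinquency,
    MissedVotes,
    Slashing,
    PeerFlag,
}

#[derive(Debug, Clone, PartialEq)]
pub struct Event {
    pub validator: String,
    pub kind: Kind,
    pub stake: u64,               // stake at risk, in the chain's base unit (assumed)
    pub started_at: u64,          // Unix seconds (assumed)
    pub resolved_at: Option<u64>, // None while the incident is ongoing
}

#[derive(Default)]
pub struct Tracker {
    open: HashMap<String, usize>, // validator -> index of its open event
    pub events: Vec<Event>,
}

impl Tracker {
    /// Feed one observation of a validator at time `now`.
    /// `problem` is `Some(kind)` when the validator looks unhealthy.
    pub fn observe(&mut self, validator: &str, stake: u64, problem: Option<Kind>, now: u64) {
        match (problem, self.open.get(validator).copied()) {
            // Healthy -> unhealthy: open a new event.
            (Some(kind), None) => {
                self.open.insert(validator.to_string(), self.events.len());
                self.events.push(Event {
                    validator: validator.to_string(),
                    kind,
                    stake,
                    started_at: now,
                    resolved_at: None,
                });
            }
            // Unhealthy -> healthy: close the open event.
            (None, Some(idx)) => {
                self.events[idx].resolved_at = Some(now);
                self.open.remove(validator);
            }
            // Still unhealthy or still healthy: nothing to record.
            _ => {}
        }
    }
}
```

Repeated unhealthy observations of the same validator extend the existing event rather than creating a new one, which keeps a noisy chain from flooding the feed with duplicates. A one-off event such as a slashing would likely need different handling than this open/close model.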

Solana delinquencies are the noisiest. Validators go in and out of delinquency regularly. A validator with 11,737 SOL at risk going dark for twenty minutes is operationally different from one with 1.5 million SOL going dark for eleven hours. The feed shows both, because both matter to different people.

Sui and Cosmos are quieter, but the consequences are harsher. Sui validators that get flagged by peers forfeit epoch rewards entirely. Cosmos validators that double-sign lose actual stake: not rewards, but principal. Different failure modes, different severity, same feed.

What we're not doing

We're not scoring validators. We're not recommending where to delegate. We're not building a staking marketplace. There are plenty of those.

We're showing you what happened, when it happened, and, if we've scanned the host, what the infrastructure looked like when it happened. That's the offering. Raw signal, no editorial.

What's next

Polkadot (DOT) is the next chain. Beyond that, we're working on alerting: the ability to watch a specific validator and get notified when something goes wrong. The data pipeline already supports it, so it's mostly a notification delivery problem now.

The longer play is connecting the incident feed more deeply to the scanning infrastructure. Right now the "in scan DB" link is a reference. You can go look at the host's security posture separately. Eventually, when a validator goes dark, we want to automatically show what changed on that host in the hours before the incident. Did a new port open? Did the timing signature shift? Did something appear that wasn't there yesterday?
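The "did a new port open?" question reduces to a set difference between two scan snapshots of the same host. The sketch below assumes each snapshot can be represented as a set of open port numbers; `PortDiff` and `diff_ports` are hypothetical names, and the timing-signature comparison is out of scope here.

```rust
use std::collections::BTreeSet;

#[derive(Debug, PartialEq)]
pub struct PortDiff {
    pub opened: Vec<u16>, // present after, absent before
    pub closed: Vec<u16>, // present before, absent after
}

/// Compare two snapshots of a host's open ports.
/// Results come out in ascending order because BTreeSet iterates in sorted order.
pub fn diff_ports(before: &BTreeSet<u16>, after: &BTreeSet<u16>) -> PortDiff {
    PortDiff {
        opened: after.difference(before).copied().collect(),
        closed: before.difference(after).copied().collect(),
    }
}
```

With a snapshot of `{22, 8000}` from yesterday and `{22, 8000, 8899}` from the hours before an incident, `diff_ports` reports port 8899 as newly opened and nothing as closed.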

That's the gap between monitoring and understanding. The feed tells you something happened. The scanner tells you what was going on underneath. Connecting the two in real time is the actual product.

slashr.dev is live. Go have a look.
